Augmentation vs Automation in Education: What the Chart Reveals

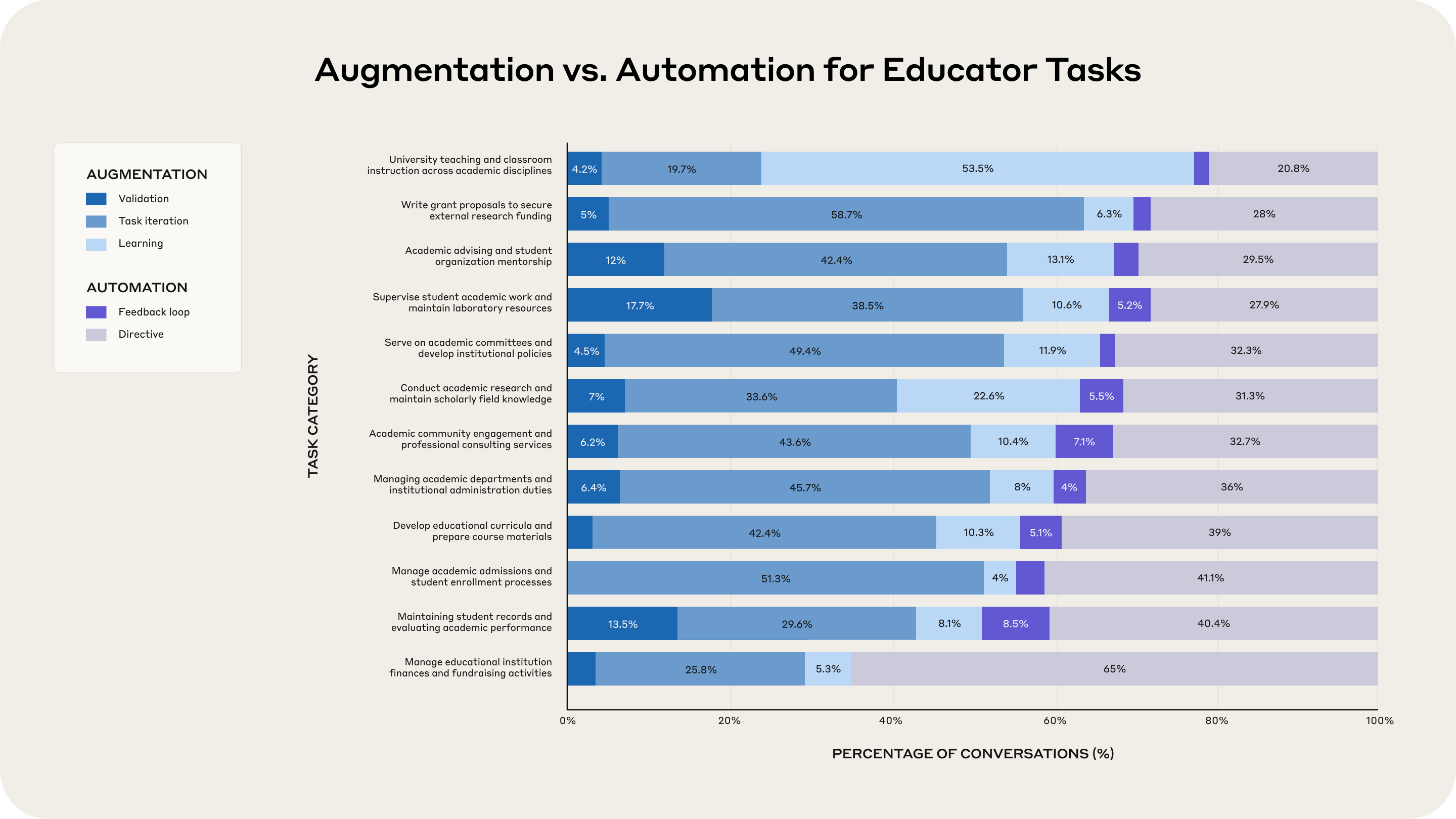

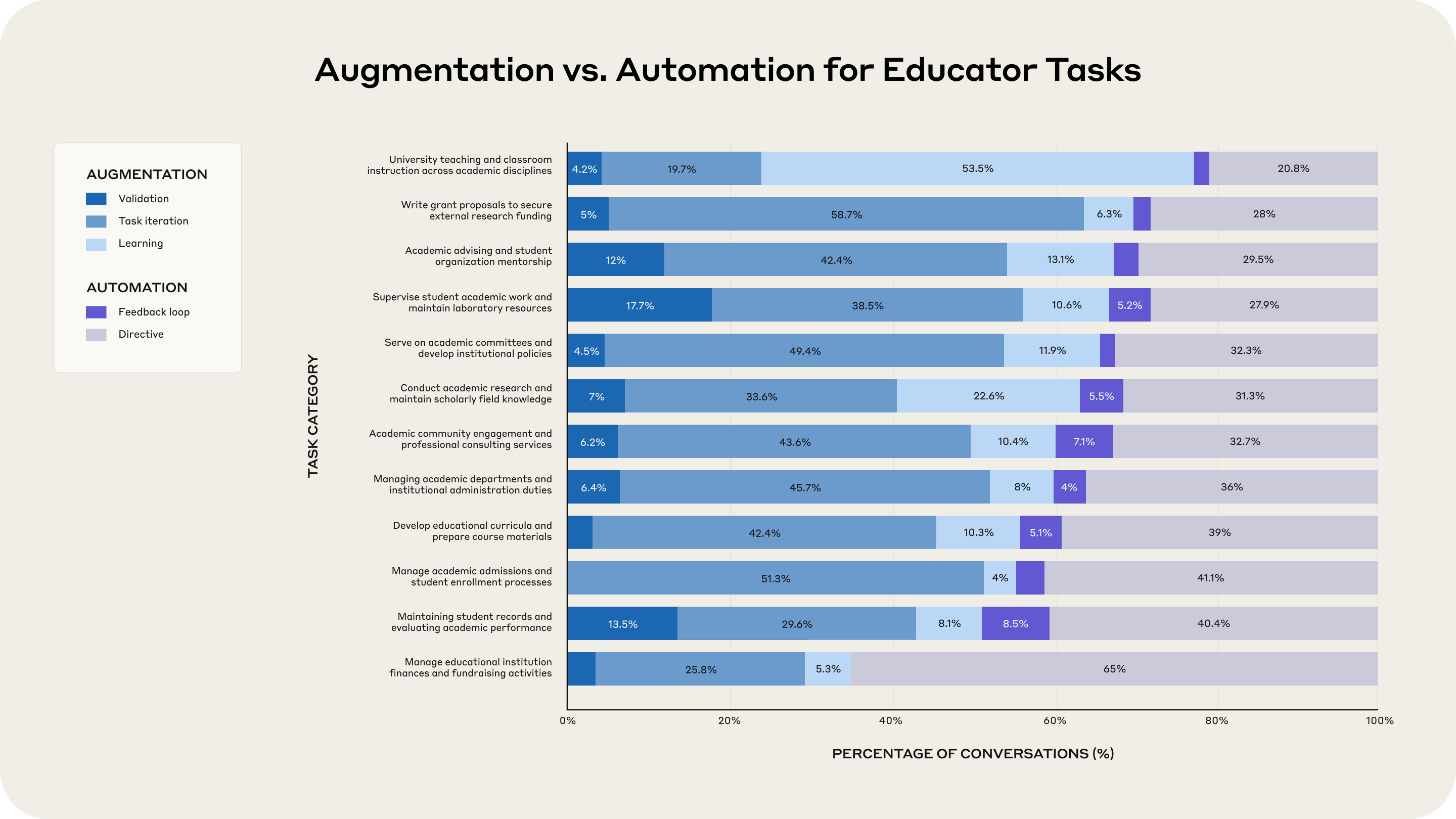

Educators are not just experimenting with AI. They are quietly standardizing it across planning, research, and administration. A new dataset from Anthropic maps where faculty collaborate with AI and where they hand work off entirely. The short version: teaching and advising are mostly augmented, record keeping and finance lean toward automation, and grading shows a split that will keep ethics committees busy. Source: anthropic.com

Augmentation dominates student-facing work. Automation concentrates in back-office tasks. Image adapted from Anthropic’s report.

Key takeaways that matter for policy and practice

Most faculty use AI to build curricula and materials

Curriculum development is the leading use case in Anthropic’s sample, followed by academic research and assessing performance. That matches what we see in the field. Faculty want help drafting lesson outlines, practice problems, and rubrics, while keeping control over the final output.

Teaching and mentoring are augmented, not replaced

Tasks that depend on context and judgment show a strong bias toward collaboration with AI. Lesson design, student advising, supervision of academic work, and grant writing all skew toward augmentation. This is where AI acts like a thinking partner rather than an autopilot.

Administrative work skews to automation

Financial planning, fundraising, admissions, and record maintenance show higher rates of full delegation. In these areas, checklists and structured inputs make templates and repeatable flows safe to use.

Grading is the flash point

Anthropic’s analysis flags that grading-related conversations are less frequent overall, but when they occur, a large share show full delegation patterns. This is the risk area. Institutions need explicit guardrails before usage normalizes by default.

What the chart actually tells you

Augmentation wins in student-facing work

University teaching and classroom instruction show a large majority of augmentative patterns. Educators stay in the loop and use AI to draft materials, generate examples, and iterate on explanations.

Automation clusters in repeatable processes

Managing finances, maintaining records, and handling admissions trend toward directive automation. These activities benefit from templates, validation rules, and audit trails.

The signal is consistent with Anthropic’s wider Economic Index

Across occupations, Anthropic has repeatedly measured a tilt toward augmentation over automation. Education mirrors that pattern but with sharper boundaries between front-office and back-office work.

Practical guardrails for schools and departments

Publish a clear grading policy

Define where AI can assist with feedback and where human evaluation is mandatory. Require instructors to disclose if AI contributed to rubric feedback. Spot-check outcomes and keep a record of checks.
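One way to make spot checks repeatable is to sample AI-assisted feedback at a fixed rate and log each human review. The sketch below is illustrative only: the function names, the 10 percent default rate, and the log fields are assumptions, not part of Anthropic's report or any institutional standard.

```python
import random
from datetime import date


def select_spot_checks(submission_ids, rate=0.1, seed=None):
    """Pick a random sample of AI-assisted feedback items for human review.

    The 10% default rate is a placeholder; set it from your own policy.
    """
    if not 0 < rate <= 1:
        raise ValueError("rate must be in (0, 1]")
    ids = list(submission_ids)
    if not ids:
        return []
    # A seeded generator lets an auditor reproduce the same selection later.
    rng = random.Random(seed)
    sample_size = max(1, round(len(ids) * rate))
    return sorted(rng.sample(ids, sample_size))


def log_check(check_log, submission_id, reviewer, agrees_with_ai, checked_on=None):
    """Append one human review outcome to the record of checks."""
    check_log.append({
        "submission_id": submission_id,
        "reviewer": reviewer,
        "agrees_with_ai": agrees_with_ai,
        "checked_on": (checked_on or date.today()).isoformat(),
    })
```

Recording the seed alongside the term or course section would let a reviewer confirm later that the sample was not hand-picked.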

Separate student-facing use from back-office automation

Treat lesson design and mentoring as augmentation first. Treat admissions and finance as automation with approvals and logs. Build different controls for each lane.
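The two lanes can be written down as an explicit policy table so that tooling and reviewers agree on which controls apply. This is a minimal sketch under stated assumptions: the task keys, field names, and the choice to reject unlisted tasks are all hypothetical and would need to match local policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LanePolicy:
    mode: str                # "augmentation" or "automation"
    requires_approval: bool  # a named person signs off before output is used
    requires_log: bool       # every run is written to an audit log


# Hypothetical lane assignments that mirror the split described above.
LANES = {
    "lesson_design": LanePolicy("augmentation", requires_approval=False, requires_log=False),
    "mentoring": LanePolicy("augmentation", requires_approval=False, requires_log=False),
    "admissions": LanePolicy("automation", requires_approval=True, requires_log=True),
    "finance": LanePolicy("automation", requires_approval=True, requires_log=True),
}


def controls_for(task):
    """Return the policy for a task; unlisted tasks are rejected, not defaulted."""
    if task not in LANES:
        raise KeyError(f"No AI policy defined for task: {task!r}")
    return LANES[task]
```

Rejecting unlisted tasks is a deliberate design choice in this sketch: a new use case has to be assigned to a lane before anyone can run it.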

Make provenance non-negotiable

Store prompts, datasets, and generated artifacts with versioning. Keep a change log so reviewers can reconstruct decisions. Tie records to your retention policy.
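A provenance record can be as simple as a versioned entry that stores the prompt, the output, and a content hash. The following is a sketch rather than a reference implementation: the class names, fields, and in-memory storage are assumptions, and a real deployment would persist records according to the institution's retention policy.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ProvenanceRecord:
    """One versioned entry describing how an AI-assisted artifact was made."""
    artifact_id: str
    version: int
    prompt: str
    output: str
    author: str
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def content_hash(self):
        # Hash prompt and output together so later edits are detectable.
        payload = json.dumps(
            {"prompt": self.prompt, "output": self.output}, sort_keys=True
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ChangeLog:
    """Append-only, in-memory log; each artifact gets increasing version numbers."""

    def __init__(self):
        self._records = []

    def append(self, artifact_id, prompt, output, author):
        # Next version is one more than the number of prior entries for this artifact.
        version = 1 + sum(r.artifact_id == artifact_id for r in self._records)
        record = ProvenanceRecord(artifact_id, version, prompt, output, author)
        self._records.append(record)
        return record

    def history(self, artifact_id):
        """Return all versions of one artifact, oldest first."""
        return [r for r in self._records if r.artifact_id == artifact_id]
```

With a log like this, a reviewer can call `history("rubric-101")` (a made-up identifier) and see each prompt and output in the order it was produced.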

Train for failure modes

Hallucinations, template drift, and silent policy violations are the real risks. Use validation steps, red-team prompts for sensitive flows, and run shadow tests before scaling.

Measure what leaders care about

Track time saved on preparation, consistency of materials, ticket resolution time in student support, and quality of feedback confirmed by sampling. Report these quarterly, and do not attach time promises or delivery claims to the numbers.

Methodology context you should know

Anthropic analyzed about 74,000 conversations associated with higher-education email domains over a defined window. They mapped chats to educator tasks using the O*NET taxonomy and split interactions by augmentation versus automation. The authors note limits such as platform specificity, early adopter bias, and the challenge of inferring educator identity from chat data. Treat the findings as descriptive signals, not a census.
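To make the augmentation versus automation split concrete, the sketch below tallies labeled conversations per task and computes the augmentation share. It is not Anthropic's pipeline: the function name and the input format of (task, mode) pairs are assumptions, and the labels in the usage note are invented toy values, not figures from the report.

```python
from collections import Counter, defaultdict

VALID_MODES = ("augmentation", "automation")


def augmentation_share(conversations):
    """Return, per task, the fraction of conversations labeled as augmentation.

    `conversations` is an iterable of (task, mode) pairs.
    """
    counts = defaultdict(Counter)
    for task, mode in conversations:
        if mode not in VALID_MODES:
            raise ValueError(f"Unknown mode: {mode!r}")
        counts[task][mode] += 1
    return {
        task: c["augmentation"] / (c["augmentation"] + c["automation"])
        for task, c in counts.items()
    }
```

For example, passing `[("grading", "automation"), ("grading", "augmentation"), ("advising", "augmentation")]` yields a share of 0.5 for grading and 1.0 for advising. A per-task share like this is descriptive only and inherits every sampling limit the authors list.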

Bottom line

AI is already part of academic work. The pattern is clear. Augment the work that requires context and judgment. Automate the repeatable back-office flows. Keep grading inside a governed loop with transparent records. Do this and you get real efficiency without crossing lines that damage trust.